GPT-3 is one of the most powerful large language models available worldwide.

It was trained on a corpus of roughly half a trillion tokens (words and word pieces), but how many unique words does that text contain?

Since GPT-3 is available in 46 natural languages and 13 programming languages, we did the math to estimate its total vocabulary size.

After crunching the numbers, we estimate GPT-3's vocabulary size at about 14,735,746 words. That figure may catch many readers off guard, but remember that GPT-3 is available in 46 languages, and each of them has a large vocabulary of its own.

Below, we explain our math, show how we arrived at this figure, and explore the possibilities GPT-3's massive vocabulary enables.

This one gets… interesting and mathy.

How Big Is GPT-3's Vocabulary? (Our Math)

Let’s chase down an estimate of GPT-3's vocabulary size.

Before we start, let’s establish some rules.

- We count each word only once, even if it has many contextual meanings.

- We assume GPT-3 was only able to learn 95% of any language's words (because not every word is available online).

- Coding languages don’t increase the vocabulary size, since their keywords are based on words from natural languages. For example, Python's “from” keyword would already have been picked up from English text.
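The first rule, counting each word once no matter how many meanings it has, amounts to counting distinct word types. Here is a minimal sketch of that rule. The helper name `count_unique_words` is hypothetical, and it assumes a word is simply a lowercased run of letters; this is an illustration of the counting rule, not how OpenAI processed its data.

```python
import re

# Hypothetical tokenizer assumption: a "word" is a run of Unicode letters,
# optionally joined by apostrophes (e.g. "don't"), compared case-insensitively.
WORD_PATTERN = re.compile(r"[^\W\d_]+(?:'[^\W\d_]+)*")

def count_unique_words(text: str) -> int:
    """Count distinct word types; each word counts once, whatever its meaning."""
    words = WORD_PATTERN.findall(text.lower())
    return len(set(words))

# "bank" appears twice with two different meanings but is counted once.
sample = "I sat on the river bank before walking to the bank."
print(count_unique_words(sample))  # prints 9
```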

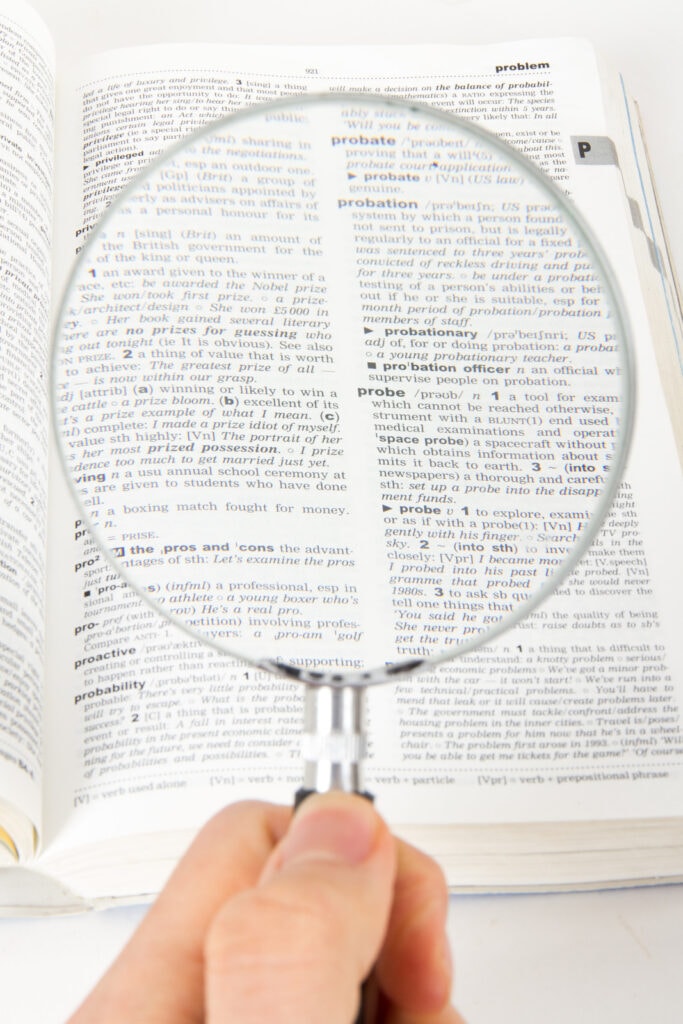

According to this blog post, some of the world's most widely spoken languages contain this many words in their dictionaries:

- Korean: 1,100,373

- Japanese: 500,000

- Italian: 260,000

- English: 171,476

- Russian: 150,000

- Spanish: 93,000

- Chinese: 85,568

Averaging these seven figures gives about 337,202 words per language (roughly 337,200).
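The averaging step can be checked with a few lines of Python. The numbers are the dictionary sizes quoted above from the blog post; the variable names are our own.

```python
# Dictionary sizes as quoted in the list above.
dictionary_sizes = {
    "Korean": 1_100_373,
    "Japanese": 500_000,
    "Italian": 260_000,
    "English": 171_476,
    "Russian": 150_000,
    "Spanish": 93_000,
    "Chinese": 85_568,
}

total = sum(dictionary_sizes.values())
average = total / len(dictionary_sizes)

print(f"total = {total:,}")          # total = 2,360,417
print(f"average = {average:,.1f}")   # average = 337,202.4
```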

…And OpenAI has told us that GPT-3 is available in 46 languages.

With some quick math, we multiply the unrounded average (about 337,202.4 words per language) by 46, the number of natural languages GPT-3 supports, and get about 15,511,311.7.

Remember what we said above about how this NLP deep learning model was trained?

Since the neural network wasn’t trained on dictionaries but on human-written text from all over the internet, we assume that only 95% of each language's words were available online.

So, we multiply our number by 0.95:

15,511,311.7 × 0.95 ≈ 14,735,746
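Here is the whole estimate as a short Python sketch. It uses exact fractions so that rounding the average to 337,200 along the way doesn't shift the final digits. The 46 languages and the 95% coverage are the article's own assumptions, not published OpenAI figures.

```python
from fractions import Fraction

# Exact sum of the seven dictionary sizes listed above, divided by 7.
average_words = Fraction(2_360_417, 7)   # about 337,202.43 words per language
NATURAL_LANGUAGES = 46                   # languages GPT-3 is available in (per the article)
COVERAGE = Fraction(95, 100)             # assumed share of each language found online

raw_total = average_words * NATURAL_LANGUAGES
estimate = raw_total * COVERAGE

print(f"{float(raw_total):,.1f}")  # 15,511,311.7
print(round(estimate))             # 14735746
```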

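We believe GPT-3's vocabulary covers about 14,735,746 words. Note that this is an estimate of how many distinct words the model could have seen across its languages, not the size of its tokenizer: GPT-3 actually splits text into subword tokens drawn from a byte-pair-encoding vocabulary of 50,257 entries.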

Why Do LLMs (Large Language Models) Need a Large Vocabulary?

Large Language Models can be effective without large vocabularies. They just need to know how to use the words.

Do you know what the word “vulpine” means?

Me neither, but it is a word in the English dictionary.

(If you’re interested, “vulpine” means “of, relating to, or similar to a fox,” but that is not the point.)

The point is that most languages can be effectively boiled down to a small percentage of their words for general comprehension.
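One way to see why a small share of words covers most everyday text is Zipf's law, which says a word's frequency is roughly proportional to 1/rank. Under that idealized assumption (exponent 1), the top k of N words cover about H_k / H_N of running text, where H_n is the n-th harmonic number. The sketch below applies this to the English dictionary size from the list above. Treat it as a rough illustration: real text only approximately follows Zipf's law, and the helper names are our own.

```python
import math

EULER_GAMMA = 0.5772156649015329

def harmonic(n: int) -> float:
    """Approximate the n-th harmonic number H_n = 1 + 1/2 + ... + 1/n."""
    return math.log(n) + EULER_GAMMA + 1 / (2 * n)

def zipf_coverage(top_k: int, vocab_size: int) -> float:
    """Share of running text covered by the top_k most frequent words,
    assuming word frequencies follow Zipf's law with exponent 1."""
    return harmonic(top_k) / harmonic(vocab_size)

ENGLISH_DICTIONARY = 171_476  # English figure from the list above

for top_k in (10_000, 50_000):
    share = zipf_coverage(top_k, ENGLISH_DICTIONARY)
    print(f"top {top_k:,} words -> {share:.1%} of running text")
```

Under this assumption, the top 10,000 words cover roughly three-quarters of running text and the top 50,000 about 90%.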

However, GPT-3’s vocabulary is so vast because it is available in 46 different languages, which lets it draw on a much more extensive range of words and phrases.

If GPT-3 were an English-only model, it could still be incredibly powerful with a vocabulary of just 50,000 words, nearly 1.5x as many as the average English speaker knows.

The main benefit here is that the model can capture nuances and variations in the language that would otherwise be impossible with a smaller vocabulary size.

Furthermore, having such large vocabularies also allows large language models to process multiple languages simultaneously, which is invaluable when dealing with multilingual projects.

But realistically, there’s no way to keep the vocab size down.

When you scrape the internet, you get text from everywhere, ranging from highly sophisticated writing to slang.

Why Wasn’t GPT-3 Trained With More Words?

GPT-3 wasn’t trained with more unique words because it would not have made the model any better.

There are two reasons for this.

The first reason is they didn’t need them. GPT-3, as a model, is trying to be human-like, not dictionary-like.

OpenAI understands that emphasizing context with its 175 billion parameters is much more important for creating good embeddings and output than wasting training time on words you and I don’t understand.

Many words in any language are rarely spoken or written, so it would have been a waste of time and money to train the model on them.

Instead, GPT-3 focused on learning the most useful, commonly used words to achieve its purpose of mimicking human speech.

The second, and maybe most important reason, is that training models at the level GPT-3 is playing at is expensive.

As a machine learning engineer, I can give you some insights.

Spending loads of time on that final 1-5 percent isn’t usually worth it.
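For vocabulary specifically, the same Zipf's-law idealization suggests why the last few percent of words are rarely worth chasing. The sketch below estimates what share of running text is made up of the rarest 1% and 5% of English dictionary words. It assumes a Zipf exponent of 1 and uses the English figure from the list above; the helper names are hypothetical, and the output is illustrative rather than measured.

```python
import math

EULER_GAMMA = 0.5772156649015329

def harmonic(n: int) -> float:
    """Approximate the n-th harmonic number H_n = 1 + 1/2 + ... + 1/n."""
    return math.log(n) + EULER_GAMMA + 1 / (2 * n)

def rare_tail_share(vocab_size: int, tail_fraction: float) -> float:
    """Share of running text made up of the rarest `tail_fraction` of words,
    assuming Zipf's law with exponent 1."""
    cutoff = int(vocab_size * (1 - tail_fraction))
    return (harmonic(vocab_size) - harmonic(cutoff)) / harmonic(vocab_size)

ENGLISH_DICTIONARY = 171_476  # English figure from the list above

for tail in (0.01, 0.05):
    share = rare_tail_share(ENGLISH_DICTIONARY, tail)
    print(f"rarest {tail:.0%} of words -> {share:.2%} of running text")
```

Under these assumptions, even the rarest 5% of words make up well under 1% of running text.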

For this reason, I assume the developers and engineers decided to focus on the essential words GPT-3 needs to function as intended.

This makes sense, as they didn’t include a dictionary in their training set and instead emphasized websites and Wikipedia (human-written text).

Would More Words Have Made GPT-3 Better?

Adding more unique words to GPT-3’s dataset would not have made much of a difference. The text it was trained on totaled only about half a terabyte.

Given GPT-3's massive neural network architecture, the dataset was likely kept to that size because training was expensive and lengthy.

If I were OpenAI building a natural language processing dataset, I’d prioritize adding more human-generated text over adding unique words.

That would be the most cost-efficient way to improve GPT-3, rather than stuffing it with words that neither you nor I know the meaning of.

You Don’t Think Coding Languages Increase Vocabulary Size?

Coding languages possibly increased the vocabulary size, but probably by only 5-10 words per coding language.

Since GPT-3 supports only 13 coding languages, those 130 or so extra words would not make a meaningful difference to the vocabulary size.
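To put the upper end of that guess in perspective, here is the arithmetic as a tiny Python snippet. The 10 words per language is the high end of the article's own 5-10 estimate, not a measured count.

```python
ESTIMATED_VOCAB = 14_735_746   # the article's estimate from the math section
CODING_LANGUAGES = 13
NEW_WORDS_PER_LANGUAGE = 10    # high end of the 5-10 guess (an assumption)

extra = CODING_LANGUAGES * NEW_WORDS_PER_LANGUAGE
share = extra / (ESTIMATED_VOCAB + extra)

print(extra)            # 130
print(f"{share:.4%}")   # share of the combined vocabulary, a tiny fraction of 1%
```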

Many readers have emailed us to argue that custom variable names in code could increase the vocabulary size.

While that’s possible, I bet these custom variable names were filtered out when the dataset was created, as you wouldn’t want variable names popping up in general conversation.

But who knows with that number of parameters.
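If such filtering did happen, one simple way to do it would be a pattern-based heuristic that drops tokens shaped like code identifiers. The sketch below is entirely hypothetical: we don't know whether or how OpenAI filtered identifiers, and the pattern (snake_case, camelCase, or tokens containing digits) and helper names are our own assumptions.

```python
from __future__ import annotations

import re

# Hypothetical heuristic: snake_case, camelCase, or digit-bearing tokens look like identifiers.
IDENTIFIER_PATTERN = re.compile(
    r"^(?:[a-z]+(?:_[a-z0-9]+)+"   # snake_case, e.g. user_count
    r"|[a-z]+(?:[A-Z][a-z0-9]*)+"  # camelCase, e.g. maxValue
    r"|\w*\d\w*)$"                 # contains a digit, e.g. tmp2
)

def looks_like_identifier(token: str) -> bool:
    return bool(IDENTIFIER_PATTERN.match(token))

def filter_identifiers(tokens: list[str]) -> list[str]:
    """Keep only tokens that do not look like code identifiers."""
    return [t for t in tokens if not looks_like_identifier(t)]

tokens = ["return", "user_count", "maxValue", "apple", "tmp2", "from"]
print(filter_identifiers(tokens))  # ['return', 'apple', 'from']
```

A heuristic like this would also keep ordinary keywords such as "return" and "from", which matches the assumption above that keywords were already counted as English words.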

Other Articles In Our GPT-3 Series:

GPT-3 is pretty confusing. To combat this, we have a full-fledged series that will help you understand it at a deeper level.

Those articles can be found here:

- Is GPT-3 Deterministic?

- Does GPT-3 Plagiarize?

- Stop Sequence GPT-3

- Is GPT-3 Self-Aware?

- GPT-3 For Text Classification

- GPT-3 vs. Bloom

- GPT-3 For Finance

- Does GPT-3 Have Emotions?